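Through a complete engineering implementation, this article walks through the whole pipeline from algorithm deployment to system integration. Actual tests show that, on a Jetson Nano, the system achieves: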

Code written by the father of Linux 27 years ago

A programmer online has dug up the Linux source code that Linus Torvalds released in 1991. It caught W3Cschool's attention, so let's take a look at the master's code:

Source code:

```asm
/*
 * system_call.s contains the system-call low-level handling routines.
 * This also contains the timer-interrupt handler, as some of the code is
 * the same. The hd-interrupt is also here.
 *
 * NOTE: This code handles signal-recognition, which happens every time
 * after a timer-interrupt and after each system call. Ordinary interrupts
 * don't handle signal-recognition, as that would clutter them up totally
 * unnecessarily.
 *
 * Stack layout in 'ret_from_system_call':
 *
 *       0(%esp) - %eax
 *       4(%esp) - %ebx
 *       8(%esp) - %ecx
 *       C(%esp) - %edx
 *      10(%esp) - %fs
 *      14(%esp) - %es
 *      18(%esp) - %ds
 *      1C(%esp) - %eip
 *      20(%esp) - %cs
 *      24(%esp) - %eflags
 *      28(%esp) - %oldesp
 *      2C(%esp) - %oldss
 */

SIG_CHLD        = 17
EAX             = 0x00
EBX             = 0x04
ECX             = 0x08
EDX             = 0x0C
FS              = 0x10
ES              = 0x14
DS              = 0x18
EIP             = 0x1C
CS              = 0x20
EFLAGS          = 0x24
OLDESP          = 0x28
OLDSS           = 0x2C

state   = 0             # these are offsets into the task-struct.
counter = 4
priority = 8
signal  = 12
restorer = 16           # address of info-restorer
sig_fn  = 20            # table of 32 signal addresses

nr_system_calls = 67

.globl _system_call,_sys_fork,_timer_interrupt,_hd_interrupt,_sys_execve

.align 2
bad_sys_call:
        movl $-1,%eax
        iret
.align 2
reschedule:
        pushl $ret_from_sys_call
        jmp _schedule
.align 2
_system_call:
        cmpl $nr_system_calls-1,%eax
        ja bad_sys_call
        push %ds
        push %es
        push %fs
        pushl %edx
        pushl %ecx              # push %ebx,%ecx,%edx as parameters
        pushl %ebx              # to the system call
        movl $0x10,%edx         # set up ds,es to kernel space
        mov %dx,%ds
        mov %dx,%es
        movl $0x17,%edx         # fs points to local data space
        mov %dx,%fs
        call _sys_call_table(,%eax,4)
        pushl %eax
        movl _current,%eax
        cmpl $0,state(%eax)     # state
        jne reschedule
        cmpl $0,counter(%eax)   # counter
        je reschedule
ret_from_sys_call:
        movl _current,%eax      # task[0] cannot have signals
        cmpl _task,%eax
        je 3f
        movl CS(%esp),%ebx      # was old code segment supervisor
        testl $3,%ebx           # mode? If so - don't check signals
        je 3f
        cmpw $0x17,OLDSS(%esp)  # was stack segment = 0x17 ?
        jne 3f
2:      movl signal(%eax),%ebx  # signals (bitmap, 32 signals)
        bsfl %ebx,%ecx          # %ecx is signal nr, return if none
        je 3f
        btrl %ecx,%ebx          # clear it
        movl %ebx,signal(%eax)
        movl sig_fn(%eax,%ecx,4),%ebx  # %ebx is signal handler address
        cmpl $1,%ebx
        jb default_signal       # 0 is default signal handler - exit
        je 2b                   # 1 is ignore - find next signal
        movl $0,sig_fn(%eax,%ecx,4)    # reset signal handler address
        incl %ecx
        xchgl %ebx,EIP(%esp)    # put new return address on stack
        subl $28,OLDESP(%esp)
        movl OLDESP(%esp),%edx  # push old return address on stack
        pushl %eax              # but first check that it's ok.
        pushl %ecx
        pushl $28
        pushl %edx
        call _verify_area
        popl %edx
        addl $4,%esp
        popl %ecx
        popl %eax
        movl restorer(%eax),%eax
        movl %eax,%fs:(%edx)    # flag/reg restorer
        movl %ecx,%fs:4(%edx)   # signal nr
        movl EAX(%esp),%eax
        movl %eax,%fs:8(%edx)   # old eax
        movl ECX(%esp),%eax
        movl %eax,%fs:12(%edx)  # old ecx
        movl EDX(%esp),%eax
        movl %eax,%fs:16(%edx)  # old edx
        movl EFLAGS(%esp),%eax
        movl %eax,%fs:20(%edx)  # old eflags
        movl %ebx,%fs:24(%edx)  # old return addr
3:      popl %eax
        popl %ebx
        popl %ecx
        popl %edx
        pop %fs
        pop %es
        pop %ds
        iret

default_signal:
        incl %ecx
        cmpl $SIG_CHLD,%ecx
        je 2b
        pushl %ecx
        call _do_exit           # remember to set bit 7 when dumping core
        addl $4,%esp
        jmp 3b

.align 2
_timer_interrupt:
        push %ds                # save ds,es and put kernel data space
        push %es                # into them. %fs is used by _system_call
        push %fs
        pushl %edx              # we save %eax,%ecx,%edx as gcc doesn't
        pushl %ecx              # save those across function calls. %ebx
        pushl %ebx              # is saved as we use that in ret_sys_call
        pushl %eax
        movl $0x10,%eax
        mov %ax,%ds
        mov %ax,%es
        movl $0x17,%eax
        mov %ax,%fs
        incl _jiffies
        movb $0x20,%al          # EOI to interrupt controller #1
        outb %al,$0x20
        movl CS(%esp),%eax
        andl $3,%eax            # %eax is CPL (0 or 3, 0=supervisor)
        pushl %eax
        call _do_timer          # 'do_timer(long CPL)' does everything from
        addl $4,%esp            # task switching to accounting ...
        jmp ret_from_sys_call

.align 2
_sys_execve:
        lea EIP(%esp),%eax
        pushl %eax
        call _do_execve
        addl $4,%esp
        ret

.align 2
_sys_fork:
        call _find_empty_process
        testl %eax,%eax
        js 1f
        push %gs
        pushl %esi
        pushl %edi
        pushl %ebp
        pushl %eax
        call _copy_process
        addl $20,%esp
1:      ret

_hd_interrupt:
        pushl %eax
        pushl %ecx
        pushl %edx
        push %ds
        push %es
        push %fs
        movl $0x10,%eax
        mov %ax,%ds
        mov %ax,%es
        movl $0x17,%eax
        mov %ax,%fs
        movb $0x20,%al
        outb %al,$0x20          # EOI to interrupt controller #1
        jmp 1f                  # give port chance to breathe
1:      jmp 1f
1:      outb %al,$0xA0          # same to controller #2
        movl _do_hd,%eax
        testl %eax,%eax
        jne 1f
        movl $_unexpected_hd_interrupt,%eax
1:      call %eax               # "interesting" way of handling intr.
        pop %fs
        pop %es
        pop %ds
        popl %edx
        popl %ecx
        popl %eax
        iret
```

The father of Linux

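Torvalds was born in Helsinki, Finland, on December 28, 1969; both of his parents were journalists. He was interested in computers from an early age. In 1988 he entered the University of Helsinki to study computer science, and in 1991 he bought a PC of his own. The university ran Unix at the time, and Torvalds found its performance unsatisfactory, so he set out to write an operating-system kernel himself. That is how the Linux operating system began.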

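From 1997 to 2003, Torvalds worked at Transmeta in California. In July 2003 he joined the Open Source Development Labs (OSDL) to work on the Linux kernel full time. OSDL later merged with the Free Standards Group (FSG) to form the Linux Foundation, where Torvalds still works today. Unlike many other IT celebrities, Torvalds keeps a fairly low profile and rarely comments in public on the merits of competitors' products.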
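The signal check at the end of the listing above (label `2:` inside `ret_from_sys_call`, plus `default_signal`) is easier to follow as a small Python model. This is a sketch, not kernel code: the function name `next_signal_action` and its return convention are hypothetical, the 32-bit `signal` field becomes a Python int, and `sig_fn` becomes a list in which 0 means "default" and 1 means "ignore", as the listing's comments describe. Bit *i* stands for signal number *i*+1, which is why the assembly executes `incl %ecx` before using the number.

```python
SIG_CHLD = 17  # same value as in system_call.s
SIG_DFL = 0    # handler value 0: default action (exit)
SIG_IGN = 1    # handler value 1: ignore


def next_signal_action(pending, handlers):
    """Model of the signal scan in ret_from_sys_call (hypothetical helper).

    pending:  bitmap; bit i set means signal number i + 1 is pending.
    handlers: list of 32 handler values (0 = default, 1 = ignore, else address).
    Returns (remaining_pending, action, signal_number), where action is
    "none", "exit" or "handler". A consumed user handler is reset to
    default in place, mirroring `movl $0,sig_fn(%eax,%ecx,4)`.
    """
    while pending:
        bit = (pending & -pending).bit_length() - 1  # like bsfl: lowest set bit
        pending &= ~(1 << bit)                       # like btrl: clear that bit
        handler = handlers[bit]
        signr = bit + 1                              # like incl %ecx
        if handler == SIG_IGN:
            continue                                 # "1 is ignore - find next signal"
        if handler == SIG_DFL:
            if signr == SIG_CHLD:
                continue                             # default for SIGCHLD: keep scanning
            return pending, "exit", signr            # default for others: do_exit(signr)
        handlers[bit] = SIG_DFL                      # one-shot handler
        return pending, "handler", signr
    return pending, "none", None
```

The early `return` in the handler case reflects a detail of the assembly: after building the handler's frame on the user stack it falls through to `3:` and `iret`, so at most one user handler is set up per return to user mode, and any remaining pending signals wait for the next system call or timer tick.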

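Learn to code with w3cschool: its programming micro-courses help beginners pick up a language faster with an efficient learn-while-you-practice format, one exercise per lesson!

Interested readers, give it a try! W3Cschool programming micro-courses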

[BLIP] Understanding BLIP

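Mainstream multimodal models fall into two broad families: encoder-based and encoder-decoder-based. Both have drawbacks: encoder-based models cannot handle text-generation tasks such as image captioning, while encoder-decoder models have had little success on image-text retrieval.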

Code written by the father of Java 22 years ago

[Listing One] PingPong

```java
class PingPong extends Thread {
    String word;   // what word to print
    int delay;     // how long to pause

    PingPong(String whatToSay, int delayTime) {
        word = whatToSay;
        delay = delayTime;
    }

    public void run() {
        try {
            for (;;) {
                System.out.print(word + " ");
                sleep(delay);   // wait until next time
            }
        } catch (InterruptedException e) {
            return;             // end this thread
        }
    }

    public static void main(String[] args) {
        new PingPong("ping", 33).start();   // 1/30 second
        new PingPong("PONG", 100).start();  // 1/10 second
    }
}
```
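Listing One's threads loop forever and only end when interrupted. A rough Python counterpart needs a different way to stop: Python threads cannot be interrupted or killed from outside, and the `Thread.stop()`/`suspend()`/`resume()` calls used in Listing Five were deprecated back in Java 1.2 as inherently unsafe. The minimal sketch below therefore uses a cooperative `threading.Event` as a stop flag. The function name `ping_pong` and its `duration` parameter are illustrative assumptions, and the words are collected in a list instead of printed so the result can be inspected.

```python
import threading
import time


def ping_pong(duration: float = 0.35) -> list:
    """Run a 'ping' and a 'PONG' thread for `duration` seconds, then stop both."""
    stop = threading.Event()
    out = []
    lock = threading.Lock()  # list.append is safe in CPython, but be explicit

    def worker(word: str, delay: float) -> None:
        # Loop until asked to stop; Event.wait(delay) is an interruptible sleep.
        while not stop.is_set():
            with lock:
                out.append(word)
            stop.wait(delay)

    threads = [
        threading.Thread(target=worker, args=("ping", 1 / 30)),  # 1/30 second
        threading.Thread(target=worker, args=("PONG", 1 / 10)),  # 1/10 second
    ]
    for t in threads:
        t.start()
    time.sleep(duration)
    stop.set()          # ask both threads to finish their loops
    for t in threads:
        t.join()        # wait until they actually have
    return out
```

`print(" ".join(ping_pong()))` gives output similar to the Java program, with roughly three "ping"s per "PONG" (exact interleaving depends on scheduling).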

[Listing Two] Account

```java
class Account {
    private double balance;

    public Account(double initialDeposit) {
        balance = initialDeposit;
    }

    public synchronized double getBalance() {
        return balance;
    }

    public synchronized void deposit(double amount) {
        balance += amount;
    }
}
```
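Python has no `synchronized` keyword, so a rough analogue of Listing Two guards the balance with an explicit `threading.Lock`. This is a sketch, not a translation of Gosling's code; the helper `concurrent_deposits` is a hypothetical usage example that hammers one account from several threads.

```python
import threading


class Account:
    """Python analogue of Listing Two: one lock guards every access to the balance."""

    def __init__(self, initial_deposit: float) -> None:
        self._balance = initial_deposit
        self._lock = threading.Lock()

    def get_balance(self) -> float:
        with self._lock:
            return self._balance

    def deposit(self, amount: float) -> None:
        # `+=` is a read-modify-write; holding the lock makes it atomic
        # with respect to other deposits, as `synchronized` does in Java.
        with self._lock:
            self._balance += amount


def concurrent_deposits(n_threads: int, n_deposits: int, initial: float = 100.0) -> float:
    """Hypothetical demo: n_threads threads each deposit 1.0, n_deposits times."""
    acct = Account(initial)

    def worker() -> None:
        for _ in range(n_deposits):
            acct.deposit(1.0)

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return acct.get_balance()
```

One difference worth knowing: a Java monitor is reentrant, while `threading.Lock` is not. If one locked method called another locked method on the same object, `threading.RLock` would be the closer match.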

[Listing Three] synchronized_abs

```java
/* make all elements in the array nonnegative */
public static void abs(int[] values) {
    synchronized (values) {
        for (int i = 0; i < values.length; i++) {
            if (values[i] < 0)
                values[i] = -values[i];
        }
    }
}
```

[Listing Four]

```java
class Queue {
    // The first and last elements in the queue
    Element head, tail;

    public synchronized void append(Element p) {
        if (tail == null)
            head = p;
        else
            tail.next = p;
        p.next = null;
        tail = p;
        notify();   // Let waiters know something arrived
    }

    public synchronized Element get() {
        try {
            while (head == null)
                wait();   // Wait for an element
        } catch (InterruptedException e) {
            return null;
        }
        Element p = head;   // Remember first element
        head = head.next;   // Remove it from the queue
        if (head == null)   // Check for an empty queue
            tail = null;
        return p;
    }
}
```
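Listing Four's `wait()`/`notify()` pattern maps onto Python's `threading.Condition`, which bundles a lock with a wait set much like a Java object's monitor. The sketch below keeps the same linked-list logic. Listing Four never shows its `Element` class, so the `Element` here is a hypothetical minimal node, and `transfer_values` is a hypothetical producer/consumer demo.

```python
import threading
from typing import Any, Optional


class Element:
    """Hypothetical node type; the listing's Element class is not shown."""

    def __init__(self, value: Any) -> None:
        self.value = value
        self.next: Optional["Element"] = None


class Queue:
    def __init__(self) -> None:
        self.head: Optional[Element] = None  # first element in the queue
        self.tail: Optional[Element] = None  # last element in the queue
        self._cond = threading.Condition()   # plays the role of the object's monitor

    def append(self, p: Element) -> None:
        with self._cond:
            if self.tail is None:
                self.head = p
            else:
                self.tail.next = p
            p.next = None
            self.tail = p
            self._cond.notify()  # let one waiter know something arrived

    def get(self) -> Element:
        with self._cond:
            while self.head is None:  # re-check after every wakeup
                self._cond.wait()
            p = self.head             # remember first element
            self.head = p.next        # remove it from the queue
            if self.head is None:     # queue is now empty
                self.tail = None
            return p


def transfer_values(values) -> list:
    """Hypothetical demo: a producer thread appends, the caller blocks in get()."""
    q = Queue()
    values = list(values)

    def producer() -> None:
        for v in values:
            q.append(Element(v))

    t = threading.Thread(target=producer)
    t.start()
    received = [q.get().value for _ in values]
    t.join()
    return received
```

`Condition.wait()` has no `InterruptedException`, so the Java branch that returns `null` on interruption has no direct counterpart here; a timeout passed to `wait()` is the usual way to give up.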

[Listing Five]

```java
Thread spinner;   // the thread doing the processing

public void userHitCancel() {
    spinner.suspend();        // whoa!
    if (askYesNo("Really Cancel?"))
        spinner.stop();       // stop it
    else
        spinner.resume();     // giddyap!
}
```

[Listing Six]

```java
class CalcThread extends Thread {
    private double Result;

    public void run() {
        Result = calculate();
    }

    public double result() {
        return Result;
    }

    public double calculate() {
        // ...
    }
}

class Join {
    public static void main(String[] args) {
        CalcThread calc = new CalcThread();
        calc.start();
        doSomethingElse();
        try {
            calc.join();
            System.out.println("result is " + calc.result());
        } catch (InterruptedException e) {
            System.out.println("No answer: interrupted");
        }
    }
}
```


What's new in C# 14: do you find it useful?

To try the new features in C# 14, you need to install the latest version of Visual Studio 2022 or download the .NET 10 SDK.

Google Pixel 2 portrait-mode code revealed: can you follow it?

Google has open-sourced DeepLab-v3+, the AI image-segmentation model it uses, so that third-party camera apps can also make use of this neural network.

Open-source code:

```python
import tensorflow as tf

from deeplab.core import feature_extractor

slim = tf.contrib.slim

_LOGITS_SCOPE_NAME = 'logits'
_MERGED_LOGITS_SCOPE = 'merged_logits'
_IMAGE_POOLING_SCOPE = 'image_pooling'
_ASPP_SCOPE = 'aspp'
_CONCAT_PROJECTION_SCOPE = 'concat_projection'
_DECODER_SCOPE = 'decoder'


def get_extra_layer_scopes():
  """Gets the scopes for extra layers.

  Returns:
    A list of scopes for extra layers.
  """
  return [
      _LOGITS_SCOPE_NAME,
      _IMAGE_POOLING_SCOPE,
      _ASPP_SCOPE,
      _CONCAT_PROJECTION_SCOPE,
      _DECODER_SCOPE,
  ]


def predict_labels_multi_scale(images,
                               model_options,
                               eval_scales=(1.0,),
                               add_flipped_images=False):
  """Predicts segmentation labels.

  Args:
    images: A tensor of size [batch, height, width, channels].
    model_options: A ModelOptions instance to configure models.
    eval_scales: The scales to resize images for evaluation.
    add_flipped_images: Add flipped images for evaluation or not.

  Returns:
    A dictionary with keys specifying the output_type (e.g., semantic
      prediction) and values storing Tensors representing predictions (argmax
      over channels). Each prediction has size [batch, height, width].
  """
  outputs_to_predictions = {
      output: []
      for output in model_options.outputs_to_num_classes
  }

  for i, image_scale in enumerate(eval_scales):
    with tf.variable_scope(tf.get_variable_scope(), reuse=True if i else None):
      outputs_to_scales_to_logits = multi_scale_logits(
          images,
          model_options=model_options,
          image_pyramid=[image_scale],
          is_training=False,
          fine_tune_batch_norm=False)

    if add_flipped_images:
      with tf.variable_scope(tf.get_variable_scope(), reuse=True):
        outputs_to_scales_to_logits_reversed = multi_scale_logits(
            tf.reverse_v2(images, [2]),
            model_options=model_options,
            image_pyramid=[image_scale],
            is_training=False,
            fine_tune_batch_norm=False)

    for output in sorted(outputs_to_scales_to_logits):
      scales_to_logits = outputs_to_scales_to_logits[output]
      logits = tf.image.resize_bilinear(
          scales_to_logits[_MERGED_LOGITS_SCOPE],
          tf.shape(images)[1:3],
          align_corners=True)
      outputs_to_predictions[output].append(
          tf.expand_dims(tf.nn.softmax(logits), 4))

      if add_flipped_images:
        scales_to_logits_reversed = (
            outputs_to_scales_to_logits_reversed[output])
        logits_reversed = tf.image.resize_bilinear(
            tf.reverse_v2(scales_to_logits_reversed[_MERGED_LOGITS_SCOPE], [2]),
            tf.shape(images)[1:3],
            align_corners=True)
        outputs_to_predictions[output].append(
            tf.expand_dims(tf.nn.softmax(logits_reversed), 4))

  for output in sorted(outputs_to_predictions):
    predictions = outputs_to_predictions[output]
    # Compute average prediction across different scales and flipped images.
    predictions = tf.reduce_mean(tf.concat(predictions, 4), axis=4)
    outputs_to_predictions[output] = tf.argmax(predictions, 3)

  return outputs_to_predictions


def predict_labels(images, model_options, image_pyramid=None):
  """Predicts segmentation labels.

  Args:
    images: A tensor of size [batch, height, width, channels].
    model_options: A ModelOptions instance to configure models.
    image_pyramid: Input image scales for multi-scale feature extraction.

  Returns:
    A dictionary with keys specifying the output_type (e.g., semantic
      prediction) and values storing Tensors representing predictions (argmax
      over channels). Each prediction has size [batch, height, width].
  """
  outputs_to_scales_to_logits = multi_scale_logits(
      images,
      model_options=model_options,
      image_pyramid=image_pyramid,
      is_training=False,
      fine_tune_batch_norm=False)

  predictions = {}
  for output in sorted(outputs_to_scales_to_logits):
    scales_to_logits = outputs_to_scales_to_logits[output]
    logits = tf.image.resize_bilinear(
        scales_to_logits[_MERGED_LOGITS_SCOPE],
        tf.shape(images)[1:3],
        align_corners=True)
    predictions[output] = tf.argmax(logits, 3)

  return predictions


def scale_dimension(dim, scale):
  """Scales the input dimension.

  Args:
    dim: Input dimension (a scalar or a scalar Tensor).
    scale: The amount of scaling applied to the input.

  Returns:
    Scaled dimension.
  """
  if isinstance(dim, tf.Tensor):
    return tf.cast((tf.to_float(dim) - 1.0) * scale + 1.0, dtype=tf.int32)
  else:
    return int((float(dim) - 1.0) * scale + 1.0)


def multi_scale_logits(images,
                       model_options,
                       image_pyramid,
                       weight_decay=0.0001,
                       is_training=False,
                       fine_tune_batch_norm=False):
  """Gets the logits for multi-scale inputs.

  The returned logits are all downsampled (due to max-pooling layers)
  for both training and evaluation.
  """
  # ... (the rest of this function is not included in this excerpt)
```

See more:

https://github.com/tensorflow/models/tree/master/research/deeplab
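The test-time augmentation in `predict_labels_multi_scale` (softmax per scale and per flipped image, mean over the stacked probabilities, then argmax over classes), together with the `(dim - 1) * scale + 1` rule in `scale_dimension`, can be reproduced with NumPy to see what happens to the numbers. This is a framework-free sketch, not DeepLab code: it assumes every logits array has already been resized to the input resolution with layout `[batch, height, width, classes]`, and the function names `softmax` and `average_predictions` are illustrative.

```python
import numpy as np


def scale_dimension(dim: int, scale: float) -> int:
    # Same arithmetic as the non-Tensor branch of scale_dimension above:
    # scale the number of intervals (dim - 1), then add the endpoint back,
    # which keeps corner pixels aligned (as with align_corners=True).
    return int((float(dim) - 1.0) * scale + 1.0)


def softmax(logits: np.ndarray) -> np.ndarray:
    # Numerically stable softmax over the last (class) axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


def average_predictions(logits_per_scale, flipped_logits_per_scale=()):
    """Average class probabilities over scales and flips, then take the argmax.

    Flipped logits were computed on a horizontally flipped image, so they are
    flipped back along the width axis (axis 2) before averaging.
    """
    probs = [softmax(x) for x in logits_per_scale]
    probs += [softmax(x[:, :, ::-1, :]) for x in flipped_logits_per_scale]
    mean = np.mean(np.stack(probs, axis=4), axis=4)  # like reduce_mean(concat(..., 4), 4)
    return np.argmax(mean, axis=3)                    # [batch, height, width] label map
```

For example, `scale_dimension(513, 0.5)` gives 257, because 512 intervals halve to 256. Averaging probabilities rather than raw logits means a scale that is very confident about a pixel can override a scale that is only mildly confident the other way.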

Hands-on: deploying AI-Codereview-Gitlab with Docker

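When a user submits code on GitLab (for example, by opening a merge request or pushing), GitLab automatically fires a webhook event that calls this system's API. The system then has a third-party large language model review the code and posts the review result directly as a note on the corresponding merge request or commit, where the team can read and act on it.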

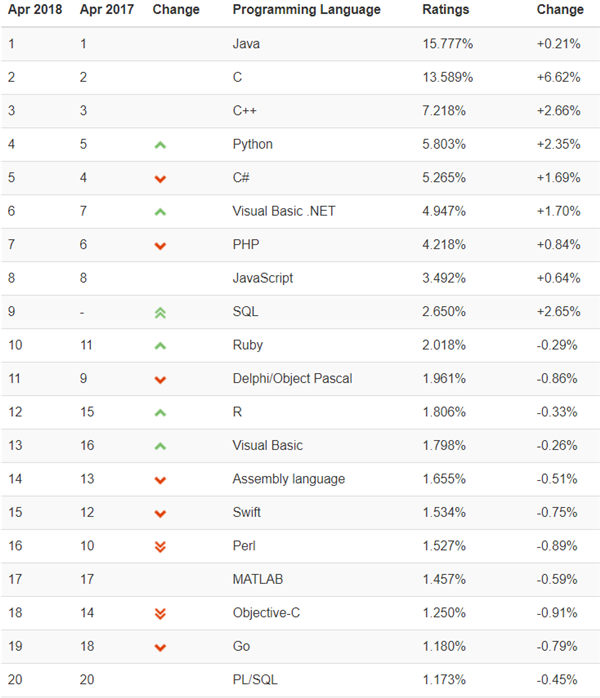
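The webhook-to-note flow described above mostly comes down to routing each delivery to the right GitLab REST endpoint. The sketch below is hypothetical and not taken from the AI-Codereview-Gitlab project: it assumes GitLab's standard `X-Gitlab-Event` header values (`Merge Request Hook`, `Push Hook`) and their payload fields, and it only computes where a review note would be posted. The LLM call and the HTTP request itself are left out, and the function names are illustrative.

```python
from typing import Any, Dict, Optional, Tuple


def note_target(event: str, payload: Dict[str, Any]) -> Optional[Tuple[str, str]]:
    """Return (API path, form field that carries the note text), or None to skip."""
    if event == "Merge Request Hook":
        project_id = payload["project"]["id"]
        mr_iid = payload["object_attributes"]["iid"]  # project-scoped MR number
        return (f"/api/v4/projects/{project_id}/merge_requests/{mr_iid}/notes", "body")
    if event == "Push Hook":
        project_id = payload["project_id"]
        sha = payload.get("checkout_sha")
        if not sha:
            # Assumed: nothing to comment on (for example, a deleted branch).
            return None
        return (f"/api/v4/projects/{project_id}/repository/commits/{sha}/comments", "note")
    return None  # other hooks (tags, issues, ...) are ignored in this sketch


def build_note_request(event: str, payload: Dict[str, Any], review_text: str):
    """Pair the routing with a review produced elsewhere (e.g. by an LLM)."""
    target = note_target(event, payload)
    if target is None:
        return None
    path, field = target
    return path, {field: review_text}
```

In a real deployment the returned path would be appended to the GitLab instance's base URL and sent as a POST with an access token (GitLab's REST API accepts a `PRIVATE-TOKEN` header).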

2018 programming-language rankings: Ruby fights its way back into the top ten

In the latest TIOBE programming-language index, C posted the largest gain, at 6.66%. Ruby also overtook Perl to return to the top ten.

The top 20 languages are listed below:

Among the current top 20, Objective-C and Perl fell the furthest, each dropping more than three places.

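Objective-C's decline is mainly due to the arrival of Swift, which led Apple to move away from Objective-C. App development is also shifting toward platform-independent languages and frameworks, and because Swift is largely confined to Apple's platforms, its own outlook is not ideal either.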

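As for Perl, until 2005 it was one of the leading scripting languages. In 2008 TIOBE predicted that Perl would die out, a prediction rejected by many Perl supporters. In 2013 Stevan Little gave a talk titled "Perl is not dead, it is a dead end", in which he noted that once software engineers abandon Perl, they do not choose it again.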

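According to TIOBE, Perl's stagnation is the main reason developers have looked for alternatives such as Python and Ruby. Even today the Perl community has no clear direction, so the language is likely to keep declining; for now it is merely struggling on.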


Notably, SQL was re-added to the TIOBE index two months ago and ranks ninth this month.


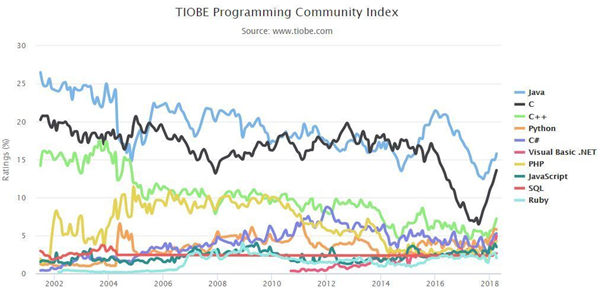

TIOBE index trends of the top 10 programming languages (2002-2018)

Programming language "Hall of Fame" (2003-2017)

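The winners of the "Programming Language of the Year" award are listed below; the award goes to the language with the highest rise in ratings over the year: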

Building an AI translation assistant with AI: even beginners can make their first app

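I recently finished a small project: an AI-powered translation assistant called LinguaLens. The most interesting part is that I built almost all of it with AI's help. The experience made me want to share something with friends who are interested in programming but haven't taken the first step yet:

Now is the best time ever to learn programming, and AI is the learning partner you can trust most.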

Would turning Java's "pseudo-generics" into "real generics" improve performance?

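Type erasure essentially removes all generics-related information, such as parameterized types and type variables. Where needed, javac also inserts type checks and casts, and when necessary it even synthesizes bridge methods.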

Xiaoshi Blog
